- Role

Computational Methods for Data Analysis

AMATH 582 - MWF 8:30-9:20, Lowe 216

AMATH 482 - MWF 9:30-10:20, MEB 246

- Instruction

Professor J. Nathan Kutz

- kutz (at) uw.edu

- 206-685-3029, Lewis 118

- Teaching Assistants: Sritam Kethireddy and Jize Zhang

- amath582 (at) uw.edu

- Office Hours (Lewis Hall 115): M 12:30-3:00, Tu 1:30-4:00, W 12:30-3:00, Th 2:30-5:00

- EDGE (section 582B/C only): M 4:00-6:00

- Lectures and Homework

Video Lectures: View

Make Up Lectures (1 lecture): Principal Component Analysis (PCA) Video

Make Up Lectures (3 lectures): Clustering & Classification Videos

Make Up Lectures (4 lectures): Reduced Order Modeling Videos

- Course Notes: 582notes.pdf or book: Amazon

- Homework: HW 1, HW 2 (cam1_1.mat, cam2_1.mat, cam3_1.mat, cam1_2.mat, cam2_2.mat, cam3_2.mat, cam1_3.mat, cam2_3.mat, cam3_3.mat, cam1_4.mat, cam2_4.mat, cam3_4.mat), HW 3

- MATLAB: in person or remotely at ICL OR Student Edition (recommended if you do not have access)

- Agenda for Lectures and Notes

- 1/3 Lecture 1: Notes 14.1, Book 15.1

- 1/5 NO CLASS (make up lecture) Book 15.3

- 1/8 Lecture 2: Notes 14.3, Book 15.2

- 1/10 NO CLASS (make up lecture)

- 1/12 Lecture 3: Notes 14.4, Book 15.4

- 1/15 MLK

- 1/17 Lecture 4: Notes 14.5, Book 15.5

- 1/19 Lecture 5: Notes 14.5, Book 15.5

- 1/22 Lecture 6: Notes 14.6, Book 15.6

- 1/24 NO CLASS (make up lecture)

- 1/26 NO CLASS (make up lecture)

- 1/29 Lecture 7: Notes 15.1, Book 16.1

- 1/31 Lecture 8: Notes 15.2, Book 16.2

- 2/2 Lecture 9: Notes 17.1, Book 18.1

- 2/5 Lecture 10: Notes 17.2, Book 18.2

- 2/7 Lecture 11: Notes XX, Book 20.1

- 2/9 Lecture 12: Notes XX, Book 20.2

- 2/12 Lecture 13: SINDy + PDE-FIND

- 2/14 Lecture 14: SINDy + PDE-FIND

- 2/16 Lecture 15: SINDy + PDE-FIND

- 2/19 President's Day

- 2/21 NO CLASS (make up lecture)

- 2/23 NO CLASS (make up lecture)

- 2/26 Lecture 16: deep neural nets

- 2/28 Lecture 17: deep neural nets

- 3/2 Lecture 18: deep neural nets

- 3/5 Lecture 19: deep neural nets

- 3/7 Lecture 20: deep neural nets

- 3/9 NO CLASS (make up lecture)

- Prerequisites

Solid background in Linear Algebra and ODEs and familiarity with MATLAB, or permission.

- Course Description

Exploratory and objective data analysis methods applied to the physical, engineering, and biological sciences. Brief review of statistical methods and their computational implementation for studying time series analysis, spectral analysis, filtering methods, principal component analysis, orthogonal mode decomposition, equation-free methods for complex systems, compressive sensing, and image processing and compression.

- Objectives

The objective is to learn how to recognize and numerically solve practical problems that may arise in your research. We will solve some serious problems using the full power of MATLAB's built-in functions and routines. This class is geared toward those who need to get the basics in scientific computing methods for data analysis. Many of today's major research methods for exploring data analysis will be covered: deep neural nets, equation-free modeling, model selection and information criteria, sparse regression, dynamic mode decomposition, principal component analysis, proper orthogonal decomposition, empirical mode decomposition, etc. Applications will range from image processing to characterizing atmospheric dynamics.

- (1) Review of Statistics: (0 week)

We will begin with a brief review of statistical methods. The principles of statistics will be largely applied in a computational context for extracting meaningful information from data.

- (a) mean, variance, moments

- (b) probability distributions

- (c) significance testing, hypothesis testing

- (2) Spectral and Time-Frequency Analysis: (4 weeks)

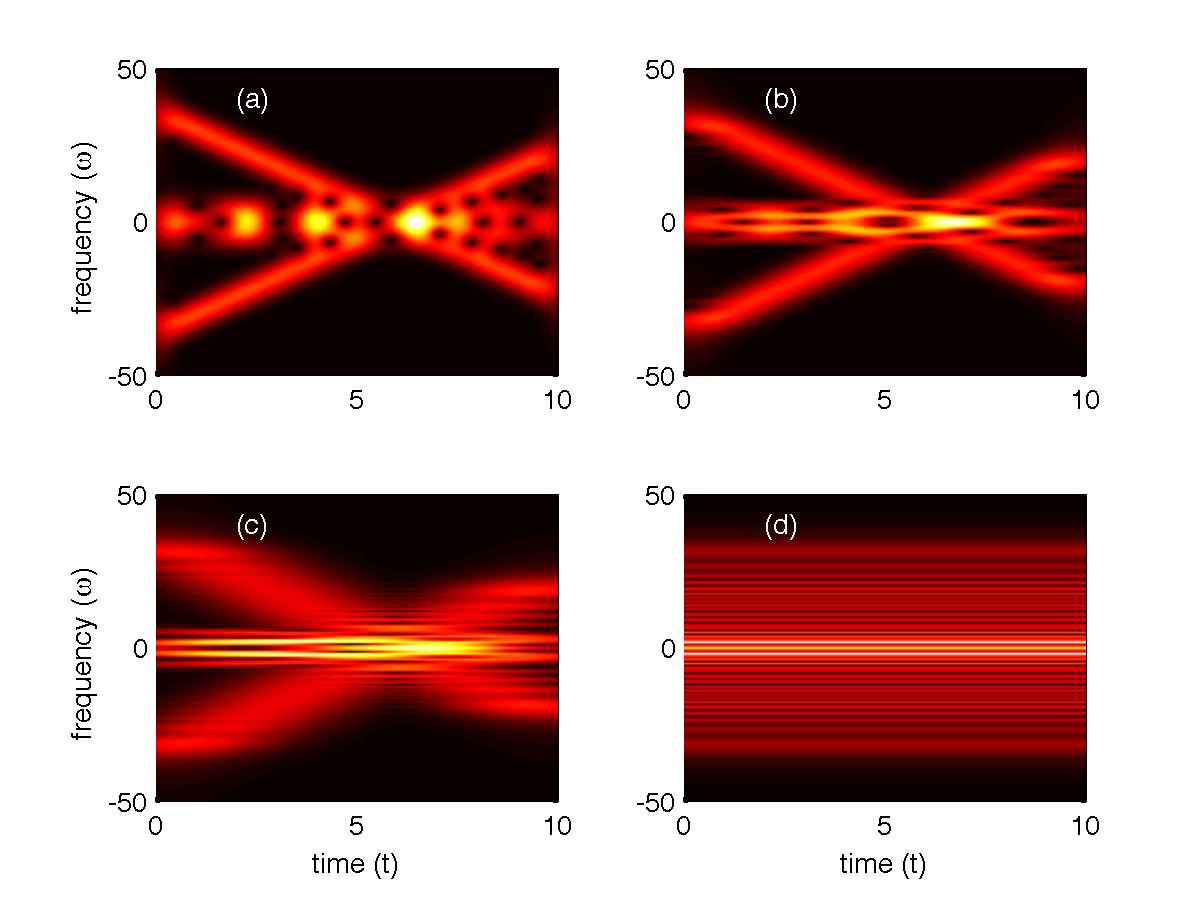

We will introduce the ideas of signal processing, filtering, and time-frequency representations, including wavelet expansions. Our applications will be largely to problems in image processing and denoising.

- (a) digital signal processing

- (b) noise reduction and filtering

- (c) image processing and face recognition

- (d) time-frequency methods and wavelets

- (e) sparse representation and compressive sensing

- (3) Dimensionality Reduction and Equation-Free Techniques: (6 weeks)

These methods are practical attempts to reduce the dimensionality of the data as well as infer statistically meaningful trends in what otherwise appears to be noisy data.

- (a) Principal Component Analysis (PCA)

- (b) Proper Orthogonal Decomposition (POD)

- (c) Singular Value Decomposition (SVD)

- (d) Dynamic Mode Decomposition (DMD)

- (e) Model Reduction

- (f) Multi-scale equation-free methods

- (g) Clustering and classification

- MAXIMUM NUMBER OF PAGES: 6 (plus additional pages for attaching your MATLAB code: Appendix B)

- Title/author/abstract: title, author/address lines, and a short (100 words or less) abstract. (It is not to be a separate title page!)

- Sec. I. Introduction and Overview

- Sec. II. Theoretical Background

- Sec. III. Algorithm Implementation and Development

- Sec. IV. Computational Results

- Sec. V. Summary and Conclusions

- Appendix A MATLAB functions used and brief implementation explanation

- Appendix B MATLAB codes

I will grade based upon how completely you solved the homework as well as neatness and little things like: did you label your graphs and include figure captions? EACH HOMEWORK IS WORTH 10 POINTS. Five points will be given for the overall layout, correctness and neatness of the report, and five additional points will be for specific things that the TAs will look for in the report itself. We will not tell you these things ahead of time, as a good and complete report should have them as part of the explanation of what you did. For example, in the first homework, the TAs may look to see if you talked about the fact that you must rescale the wavenumbers by 2*pi/L since the FFT assumes 2*pi periodic signals. This is an important detail, so you would be expected to include it. If you do, you get the point; if not, you miss the point.
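As an illustration of the wavenumber rescaling mentioned above, here is a minimal sketch. It is written in standard-library Python rather than MATLAB so that it is self-contained, and it is not taken from the course materials. The names `wavenumbers` and `dft`, the domain length `L = 20`, the grid size `n = 64`, and the test signal are all assumptions chosen for the example. The naive `dft` stands in for a library FFT and is only suitable for small `n`.

```python
import cmath
import math


def wavenumbers(n, L):
    """Wavenumbers for n samples on a periodic domain of length L, in FFT order."""
    # Integer mode numbers in the usual FFT ordering:
    # non-negative modes first, then the negative modes.
    modes = [m if m < n / 2 else m - n for m in range(n)]
    # Rescale by 2*pi/L: integer modes correspond to a 2*pi-periodic domain,
    # so a domain of length L needs this factor.
    return [(2 * math.pi / L) * m for m in modes]


def dft(x):
    """Naive O(n^2) discrete Fourier transform (illustration only)."""
    n = len(x)
    return [
        sum(x[j] * cmath.exp(-2j * math.pi * k * j / n) for j in range(n))
        for k in range(n)
    ]


L = 20.0   # assumed domain length
n = 64     # assumed number of grid points
xs = [-L / 2 + L * j / n for j in range(n)]  # periodic grid, endpoint excluded

# Test signal: a cosine that completes 3 periods over the domain,
# so its true wavenumber is 2*pi*3/L.
signal = [math.cos(2 * math.pi * 3 * x / L) for x in xs]

k = wavenumbers(n, L)
spectrum = dft(signal)

# Locate the dominant spectral peak and read off its rescaled wavenumber.
peak_index = max(range(n), key=lambda i: abs(spectrum[i]))
peak_k = abs(k[peak_index])

print(f"peak wavenumber: {peak_k:.4f}")
print(f"expected 2*pi*3/L: {2 * math.pi * 3 / L:.4f}")
```

Without the `2 * math.pi / L` factor the peak would be reported at the integer mode number 3 instead of the physical wavenumber of the signal on a domain of length `L`.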

NOTE: The report does not have to be long. But it does have to be complete.

NOTE 2: This report is not for me, it is for you! Specifically, for the future you. So write a nice report so that you could reproduce the results if you need the methods addressed here in another year or more.

A few things should be kept in mind when generating your reports:

- 1. Use a professional grade word processor (LaTeX or MS Word, for example)

- 2. For equations: LaTeX already does a nice job, but in Word, use Microsoft Equation Editor 3.0

- 3. Label your graphs. Include brief figure captions. Reference the figure in the text with a more detailed account of the figure.

- 4. Figures should be set flush with the top or bottom of a page.

- 5. Label all equations.

- 6. Provide references where appropriate.

- 7. All coding should be shuffled to Appendix A and B. Reference it when necessary.

- 8. Always remember: this report is being written for YOU! So be clear and concise.

- 9. Spellcheck.

Syllabus

Grading

Your course grade will be determined entirely from your homework (100%). There will be 6 homeworks over the quarter.

Each of the homework sets will be part of your final grade. During the quarter, you will receive six homeworks that you will turn in via the class DROPBOX. These six homeworks are equally weighted and together are worth 100% of your grade. Each homework should be written as if it were an article/tutorial being prepared for submission. I expect a high level of professionalism on these reports. The following is the expected format for homework submission: